What you need to know about developing GPT3 applications

OpenAI removed the API waitlist for its flagship language model, GPT3, last week. Any developer who meets the OpenAI API Terms of Use can now apply and integrate GPT3 into their applications. Since the beta release of GPT3, developers have built hundreds of applications based on the language model.

However, creating a successful GPT3 product has its own challenges. You need to find a way to leverage OpenAI's advanced deep learning models to deliver maximum value to your users while maintaining scalability and cost-effectiveness of your operations.

Fortunately, OpenAI provides many options to help you get the most out of your budget when using GPT3. Here's what developers building GPT3 applications have to say about best practices.

Models and tokens

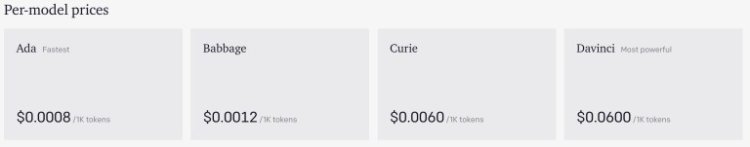

OpenAI offers four GPT-3 versions: Ada, Babbage, Curie and Davinci. The Ada is the fastest, cheapest and lowest performing model. Davinci is the slowest, most expensive and most efficient. Babbage and Curie are between the two extremes. The

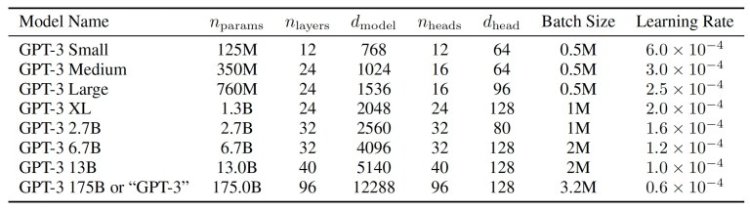

OpenAI website does not provide detailed architectural details for each model, but the original GPT3 documentation contains a list of different versions of the language model. The main difference between the models is the number of parameters and levels, from 12 levels and 125 million parameters to 96 levels and 175 billion parameters. Adding layers and parameters increases the model's ability to learn, but also increases processing time and cost.
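The link between layers, width, and parameter count can be sketched with a common back-of-the-envelope formula (an assumption here, not something OpenAI publishes for its API models): each transformer layer holds about 12·d_model² weights (4·d² for attention and 8·d² for a feed-forward layer four times as wide), plus the token and position embeddings. The layer counts and widths below are the smallest and largest configurations listed in the GPT-3 paper.

```python
# Rough parameter estimate for a GPT-style decoder-only transformer.
# Approximation (assumption): each layer ~ 12 * d_model^2 weights
# (4*d^2 attention + 8*d^2 feed-forward), plus token and position
# embeddings. Biases and layer norms are ignored.

VOCAB_SIZE = 50257      # GPT-2/GPT-3 BPE vocabulary size
CONTEXT_LENGTH = 2048   # GPT-3 context window (number of position embeddings)


def estimate_params(n_layers: int, d_model: int) -> int:
    per_layer = 12 * d_model ** 2
    embeddings = (VOCAB_SIZE + CONTEXT_LENGTH) * d_model
    return n_layers * per_layer + embeddings


# Smallest and largest configurations from the GPT-3 paper: (layers, d_model).
configs = {
    "GPT-3 Small": (12, 768),
    "GPT-3 175B": (96, 12288),
}

for name, (layers, width) in configs.items():
    print(f"{name}: ~{estimate_params(layers, width) / 1e9:.3f}B parameters")
```

Both estimates land close to the published 125 million and 175 billion figures, and the formula shows why cost climbs so fast: parameter count grows linearly with depth but quadratically with width.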

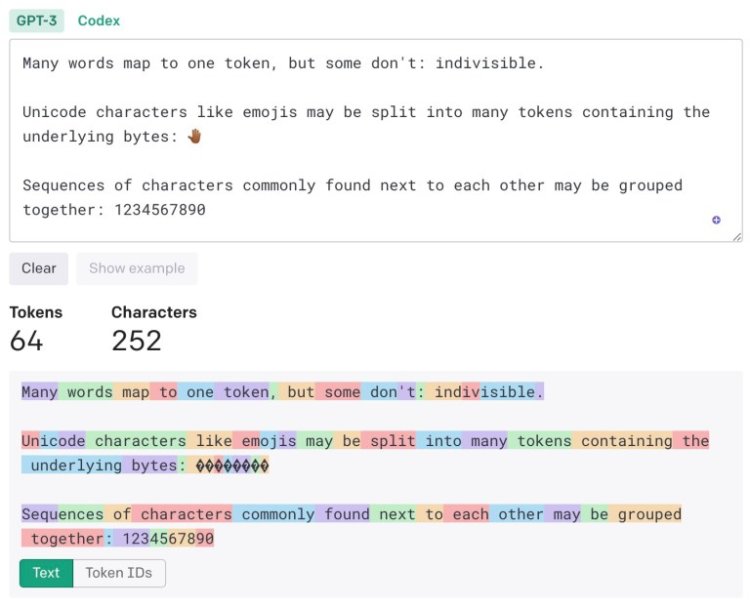

OpenAI prices model usage per token. According to OpenAI, "one token generally corresponds to ~4 characters of text for common English text. This translates to roughly ¾ of a word (so 100 tokens ~= 75 words)."

An example from OpenAI's Tokenizer tool:

In general, if you use plain English (avoid jargon, use simple words with few syllables, etc.), you will get a better token-to-word ratio. In the Tokenizer example, every word except "GPT3" counts as a single token.
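OpenAI's rules of thumb can be turned into a quick estimator for budgeting before you call the API. This is a minimal sketch built only on the two ratios quoted above; the function names are hypothetical, and for exact counts you would use a real tokenizer such as OpenAI's Tokenizer tool.

```python
import math

# Rough token estimates from OpenAI's rules of thumb for plain English:
# ~4 characters per token, and 100 tokens ~= 75 words.
# These are approximations; a real tokenizer gives exact counts.


def tokens_from_chars(text: str) -> int:
    # About 4 characters of English text per token, rounded up.
    return math.ceil(len(text) / 4)


def tokens_from_words(text: str) -> int:
    # 100 tokens ~= 75 words, i.e. about 4/3 tokens per word, rounded up.
    return math.ceil(len(text.split()) * 100 / 75)


prompt = "Write a short description of a haunted castle for a fantasy game."
print(tokens_from_chars(prompt), tokens_from_words(prompt))
```

Because these heuristics only count characters or words, they can't capture the effect described above: jargon and unusual words such as "GPT3" split into several tokens and push the real count higher.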

One of the strengths of GPT3 is its few-shot learning capability. If you don't like the model's response to a prompt, you can guide the model by providing a longer prompt with good examples. These examples act as on-the-fly training and improve GPT3's output without the need to update the model's parameters.

Note that OpenAI charges for the total number of tokens: the tokens in the input prompt plus the output tokens GPT3 returns. So long prompts packed with few-shot examples add to the cost of using GPT3.
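A minimal sketch of how few-shot examples inflate the bill, assuming the ~4 characters per token rule of thumb and a simple "Input:/Output:" prompt layout (a common convention, not a format GPT3 requires). All function names are hypothetical, and no API is called.

```python
import math
from typing import List, Tuple


def estimate_tokens(text: str) -> int:
    # Rough estimate: ~4 characters of English text per token.
    return math.ceil(len(text) / 4)


def build_few_shot_prompt(instruction: str, examples: List[Tuple[str, str]], query: str) -> str:
    # Each (input, output) example is shown to the model before the real query,
    # so the model can infer the task from the pattern.
    parts = [instruction]
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}\nOutput: {example_output}")
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


def billed_tokens_upper_bound(prompt: str, max_completion_tokens: int) -> int:
    # Billing covers prompt tokens plus completion tokens; assuming the
    # completion uses its full length limit gives an upper bound.
    return estimate_tokens(prompt) + max_completion_tokens


examples = [
    ("The service was painfully slow.", "negative"),
    ("Best pizza in town!", "positive"),
]
zero_shot = build_few_shot_prompt("Classify the sentiment of each review.", [], "I loved the desserts.")
two_shot = build_few_shot_prompt("Classify the sentiment of each review.", examples, "I loved the desserts.")

print(billed_tokens_upper_bound(zero_shot, 5))
print(billed_tokens_upper_bound(two_shot, 5))
```

Every example you add is paid for on every request, so it is worth checking how few examples still give acceptable output.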

Which model should you use?

The difference in cost between the cheapest and the most expensive GPT3 models is 75x, so it is important to know which option is best for your application.

Matt Shumer, co-founder and CEO of OthersideAI, developed an AI-powered writing tool using GPT3. OthersideAI's flagship product, HyperWrite, uses GPT3 for text generation, autocompletion, and paraphrasing.

Shumer told TechTalks that when choosing between GPT3 models, you should start by considering the complexity of the intended use case.

“If it's something as simple as binary classification, you can start with Ada or Babbage. For very complex tasks, such as conditional generation that requires high output quality and reliability, we start with Davinci,” he said.

For difficult tasks, Shumer starts with the largest model, Davinci, and then works his way down to smaller models.

“Once something works on Davinci, I try to adapt the prompt to Curie. This usually means adding more examples, clarifying the structure, or both. If it works on Curie, I move on to Babbage and then to Ada.”
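The workflow in that quote amounts to a simple downgrade loop. The sketch below assumes a hypothetical `works(model)` check (for example, running the reworked prompt on a handful of test inputs and judging the results); the engine names are OpenAI's base GPT3 models, but nothing here calls the API.

```python
from typing import Callable, Optional, Sequence

# OpenAI's base GPT3 engines, ordered from largest (most capable, most
# expensive) to smallest.
MODELS_LARGEST_FIRST = ["davinci", "curie", "babbage", "ada"]


def smallest_working_model(
    models: Sequence[str],
    works: Callable[[str], bool],
) -> Optional[str]:
    """Walk down from the largest model and return the smallest one that still
    passes `works`, stopping at the first failure.

    `works` is a hypothetical check: in practice it would run the (reworked)
    prompt for that model on test inputs and judge the output quality.
    Returns None if even the largest model fails.
    """
    best = None
    for model in models:
        if not works(model):
            break
        best = model
    return best


# Demonstration with made-up evaluation results: the task works down to Curie.
results = {"davinci": True, "curie": True, "babbage": False, "ada": False}
print(smallest_working_model(MODELS_LARGEST_FIRST, results.__getitem__))  # curie
```

Stopping at the first failure mirrors the step-by-step process in the quote; if you would rather test every engine independently, you could evaluate all four and keep the cheapest one that passes.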

Some applications use multi-stage systems that combine different models.

“For example, if it's a generative task that requires some classification as a preceding step, you can use Babbage for the classification and Curie or Davinci for the generative step,” he said. “After a while, you'll get a feel for what works for different use cases.”

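A two-stage setup like the one Shumer describes could be wired up roughly as follows. This is a minimal sketch under stated assumptions: `complete(model, prompt)` is a hypothetical stand-in for whatever function sends a prompt to a given engine, the "simple/complex" labels are illustrative, and a fake backend replaces the real API so the routing logic can run on its own.

```python
from typing import Callable

# Hypothetical signature: complete(model_name, prompt) -> generated text.
# In a real app this would wrap an API call; here it is injected so the
# routing logic can be shown without network access.
Complete = Callable[[str, str], str]


def classify_then_generate(request: str, complete: Complete) -> str:
    # Step 1: a small, cheap model does the classification.
    label = complete(
        "babbage",
        f"Classify the following request as simple or complex.\n\nRequest: {request}\nLabel:",
    ).strip().lower()

    # Step 2: only complex requests are routed to the most expensive model.
    generator = "davinci" if label == "complex" else "curie"
    return complete(generator, f"{request}\n\n")


def fake_complete(model: str, prompt: str) -> str:
    # Stand-in backend for the demo: "classifies" essays as complex and
    # otherwise just reports which model handled the prompt.
    if model == "babbage":
        return " complex" if "essay" in prompt else " simple"
    return f"[{model} output]"


print(classify_then_generate("Write an essay about a dragon's hoard.", fake_complete))  # [davinci output]
print(classify_then_generate("Suggest a name for a tavern.", fake_complete))            # [curie output]
```
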
Paul Bellow, author and developer of LitRPG Adventures, used Davinci for his GPT3-based RPG content creator.

“I wanted the best possible results so I could use them for fine-tuning later,” Bellow told TechTalks. “Davinci is the slowest and most expensive engine, but higher output quality was important to me at this stage of development. I've paid the premium, but now I have over 10,000 generations available for further fine-tuning. Datasets have value.” (More on fine-tuning later.)

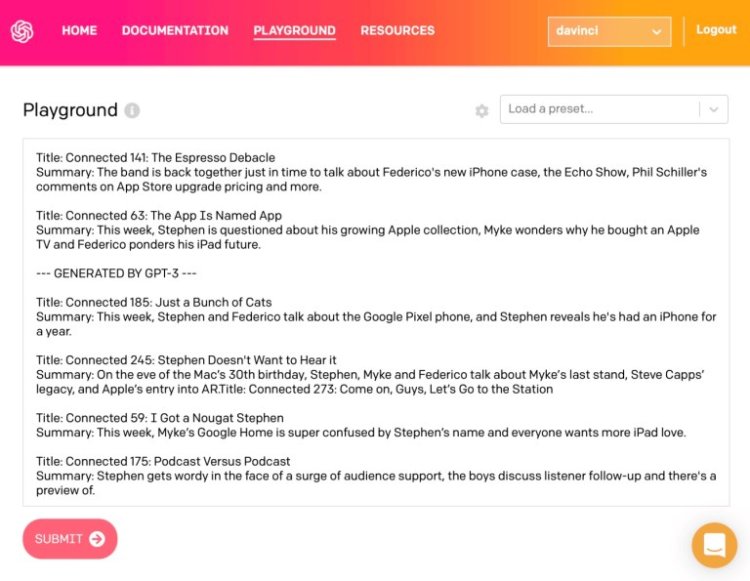

Bellow notes that the best way to find out whether different models work for a problem is to try your prompts on multiple GPT3 models yourself. He says he runs multiple tests in the Playground, OpenAI's web interface for experimenting with prompts (note that OpenAI charges for using the Playground).

“In most cases, well-thought-out prompts can get good content out of the Curie model. It just depends on the use case,” Bellow said.

Balancing costs and quality

When choosing a model for your application, you need to balance cost against output quality. A higher-performing model can produce better output, but the improvement may not justify the price difference.
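The 75x price gap between the cheapest and most expensive models compounds quickly at scale. As a rough sketch, assuming the per-1K-token base-model prices OpenAI listed around the time of writing (Ada $0.0008, Babbage $0.0012, Curie $0.006, Davinci $0.06, which reproduce the 75x ratio; check current pricing before relying on them) and a made-up workload, monthly costs compare like this:

```python
# Assumed per-1K-token prices in micro-dollars (1 USD = 1_000_000), based on
# OpenAI's base-model list prices around late 2021. Integers keep the
# arithmetic exact; verify against current pricing before use.
PRICE_MICRO_USD_PER_1K = {
    "ada": 800,         # $0.0008
    "babbage": 1_200,   # $0.0012
    "curie": 6_000,     # $0.006
    "davinci": 60_000,  # $0.06
}


def monthly_cost_usd(model: str, tokens_per_request: int, requests_per_month: int) -> float:
    # Total billed tokens (prompt + completion) times the per-1K price.
    total_tokens = tokens_per_request * requests_per_month
    return total_tokens * PRICE_MICRO_USD_PER_1K[model] / 1_000 / 1_000_000


ratio = PRICE_MICRO_USD_PER_1K["davinci"] / PRICE_MICRO_USD_PER_1K["ada"]
print(f"Davinci costs {ratio:.0f}x as much as Ada per token")

# Hypothetical workload: 500 tokens per request, 100,000 requests per month.
for model in PRICE_MICRO_USD_PER_1K:
    print(f"{model:>8}: ${monthly_cost_usd(model, 500, 100_000):,.2f}/month")
```

For this workload of 50 million tokens a month, that is $40 on Ada versus $3,000 on Davinci, which is why the quality gain has to pay for itself.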

As Shumer put it, “You have to build a business model around your product that can support the engine you use. If you want your users to get high-quality output, Davinci is worth it, and you can pass the costs on to them. If you're building a large-scale free product and your users are happy with mediocre results, you can use a smaller engine. It all depends on the goals of the product.”

According to Shumer, OthersideAI has developed a solution that uses a combination of GPT3 models for different use cases: paid users get the larger GPT3 model, while free users get a smaller one.
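The tiered approach boils down to a lookup from plan to engine. This is a minimal sketch of the idea, not OthersideAI's code; the tier names, the `User` type, and which engine each tier gets are hypothetical choices.

```python
from dataclasses import dataclass

# Hypothetical tier-to-engine mapping: paying users get the larger model,
# free users a smaller one. The exact engines per tier are a product decision.
MODEL_BY_TIER = {
    "paid": "davinci",
    "free": "curie",
}


@dataclass
class User:
    user_id: str
    tier: str  # expected: "paid" or "free"


def model_for(user: User) -> str:
    # Any unrecognized tier falls back to the cheaper free-tier model.
    return MODEL_BY_TIER.get(user.tier, MODEL_BY_TIER["free"])


for user in [User("u1", "paid"), User("u2", "free"), User("u3", "trial")]:
    print(user.user_id, "->", model_for(user))
```

Falling back to the cheaper engine means a misconfigured account costs less rather than more.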

For LitRPG Adventures, quality is paramount, so Bellow initially stuck with Davinci. He used the base Davinci model with one- or two-shot prompts, which increased costs but produced high-quality GPT3 output.

“The Davinci model in the OpenAI API is a bit expensive right now, but the cost is likely to come down over time,” he said. “What gives me flexibility now is the ability to fine-tune Curie and the smaller models, or Davinci with permission. That lets me lower my cost per generation a little while maintaining high quality.”
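Saved Davinci outputs are what make fine-tuning a cheaper model practical: past generations become training data. As a minimal sketch, assuming the prompt/completion JSONL layout (one JSON object per line) that OpenAI's GPT3 fine-tuning accepted, saved generations could be converted as below; the separator, stop sequence, and example records are illustrative assumptions.

```python
import json
from typing import Iterable, Tuple

# Illustrative conventions (assumptions, not requirements): a fixed separator
# marks the end of every prompt, and a stop sequence marks the end of every
# completion so the fine-tuned model learns where to stop.
PROMPT_SEPARATOR = "\n\n###\n\n"
STOP_SEQUENCE = " END"


def to_finetune_jsonl(pairs: Iterable[Tuple[str, str]]) -> str:
    """Convert (prompt, saved_generation) pairs into prompt/completion JSONL,
    one JSON object per line."""
    lines = []
    for prompt, generation in pairs:
        record = {
            "prompt": prompt.strip() + PROMPT_SEPARATOR,
            # Completions conventionally begin with a space.
            "completion": " " + generation.strip() + STOP_SEQUENCE,
        }
        lines.append(json.dumps(record))
    return "".join(line + "\n" for line in lines)


# Made-up example records standing in for saved Davinci outputs.
saved_generations = [
    ("Describe a tavern in a mountain village.", "The Frostmug Inn clings to the cliffside..."),
    ("Name a cursed sword.", "Duskreaver"),
]
print(to_finetune_jsonl(saved_generations), end="")
```

Using the same separator and stop sequence at inference time keeps the fine-tuned model's prompts consistent with what it saw during training.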

He has been able to build a business model that covers the cost of using Davinci.

“LitRPG Adventures isn't very profitable, but it pays for itself and is almost ready to scale,” he said.

GPT-3 alternatives

OpenAI received a lot of criticism for its decision not to release GPT3 as an open-source model. Since then, other developers have released alternatives to GPT3 and made them publicly available. One very popular project is EleutherAI's GPT-J. Like other open-source projects, GPT-J requires technical effort on the application developer's part to set up and run. It also lacks the ease of use and scalability that come with OpenAI hosting and fine-tuning its models in the Microsoft Azure cloud.

Nonetheless, open-source models are useful and worth considering if you have the in-house talent to customize them and they suit your application's needs.

“GPT-J isn't as good as full GPT3, but it's useful if you know how to work with it. It's exponentially harder to get complex prompts working with GPT-J than with Davinci, but it's possible for most use cases,” Shumer said. “You won't get the same super-high-quality output, but with a little time and effort you can get decent output. These models can also be cheaper to run, which is a big plus given Davinci's cost. We've successfully used similar models at Otherside.”

“In my experience, it performs at about the same level as OpenAI's Curie model,” Bellow said. “OpenAI doesn't disclose many details, but I think Curie is roughly on par with GPT-J and others. I think other players will soon offer more options (hopefully)... Competition between providers is good for consumers like me.”